LLMs Don’t Replace Your Brain — They Align It

Every major shift in technology brings the same fear:

“Will this replace human judgment?” With LLMs, the fear is louder, and often misplaced, because the most powerful use of LLMs in strategy is not automation. It’s alignment. LLMs don’t replace thinking. They reduce the friction that pulls thinking out of alignment over time.

The Fear: Outsourcing Thinking to Machines

Skepticism around LLMs in strategy usually sounds like this:

- “AI shouldn’t make strategic decisions”

- “Context is too nuanced for machines”

- “Strategy requires human judgment”

All of that is correct. The mistake is assuming that LLMs are valuable only if they replace judgment. That’s not where their real power lies.

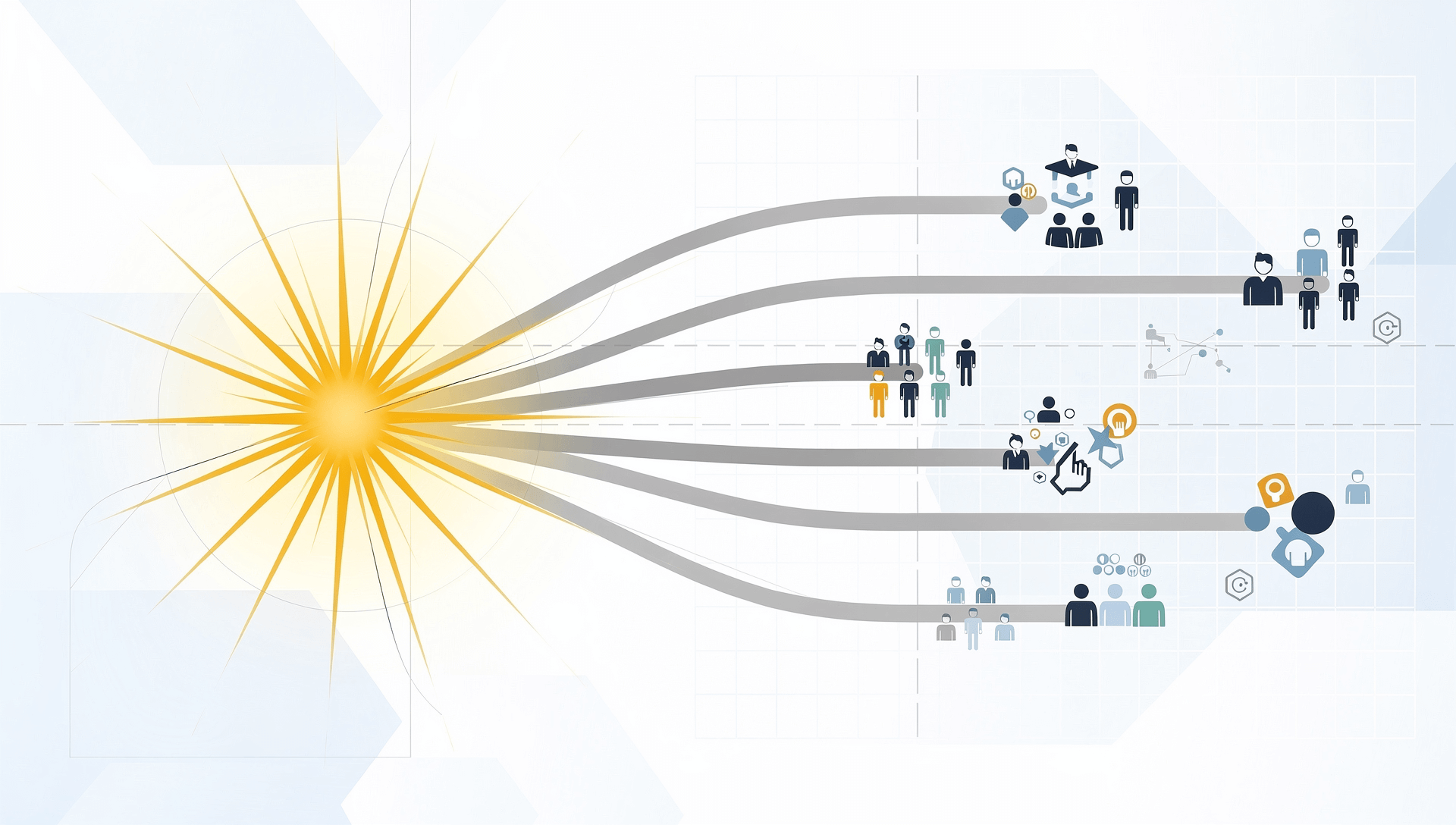

The Real Problem Isn’t Thinking — It’s Drift

Most organizations don’t struggle because leaders can’t think strategically. They struggle because:

- Strategy fragments across teams

- Decisions lose shared context

- Assumptions diverge quietly

- Alignment erodes under execution pressure

This isn’t a cognition problem. It’s an alignment problem. Humans think well — but they don’t stay aligned automatically.

What Alignment Actually Requires

Alignment is not agreement at a moment in time. It requires:

- Shared understanding of intent

- Consistent interpretation of priorities

- Traceability between decisions and objectives

- Continuity across time, teams, and change

This is hard to maintain manually, especially as organizations scale. Intelligence alone does not fix it, because the problem is keeping many people's thinking connected, not improving any one person's thinking.
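To make "traceability between decisions and objectives" concrete, here is a minimal sketch of a decision log in Python. It is illustrative only: `Objective`, `Decision`, and `find_untraced` are hypothetical names invented for this example, and the sample data is made up. The idea is simply that each decision records which objectives it serves, so decisions with no link to a known objective can be listed and reviewed by people.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Objective:
    id: str
    statement: str


@dataclass
class Decision:
    title: str
    objective_ids: list[str] = field(default_factory=list)  # objectives this decision serves
    assumptions: list[str] = field(default_factory=list)    # assumptions stated explicitly


def find_untraced(decisions, objectives):
    """Return titles of decisions that reference no known objective."""
    known = {o.id for o in objectives}
    return [d.title for d in decisions if not known.intersection(d.objective_ids)]


# Hypothetical sample data
objectives = [Objective("O1", "Grow enterprise retention")]
decisions = [
    Decision("Build SSO", ["O1"], ["Enterprise buyers require SSO"]),
    Decision("Redesign landing page"),  # no objective recorded
]

untraced = find_untraced(decisions, objectives)
print(untraced)
```

The check does not judge whether a decision is good. It only flags where the link to intent is missing, which leaves the judgment with the people who own the decision.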

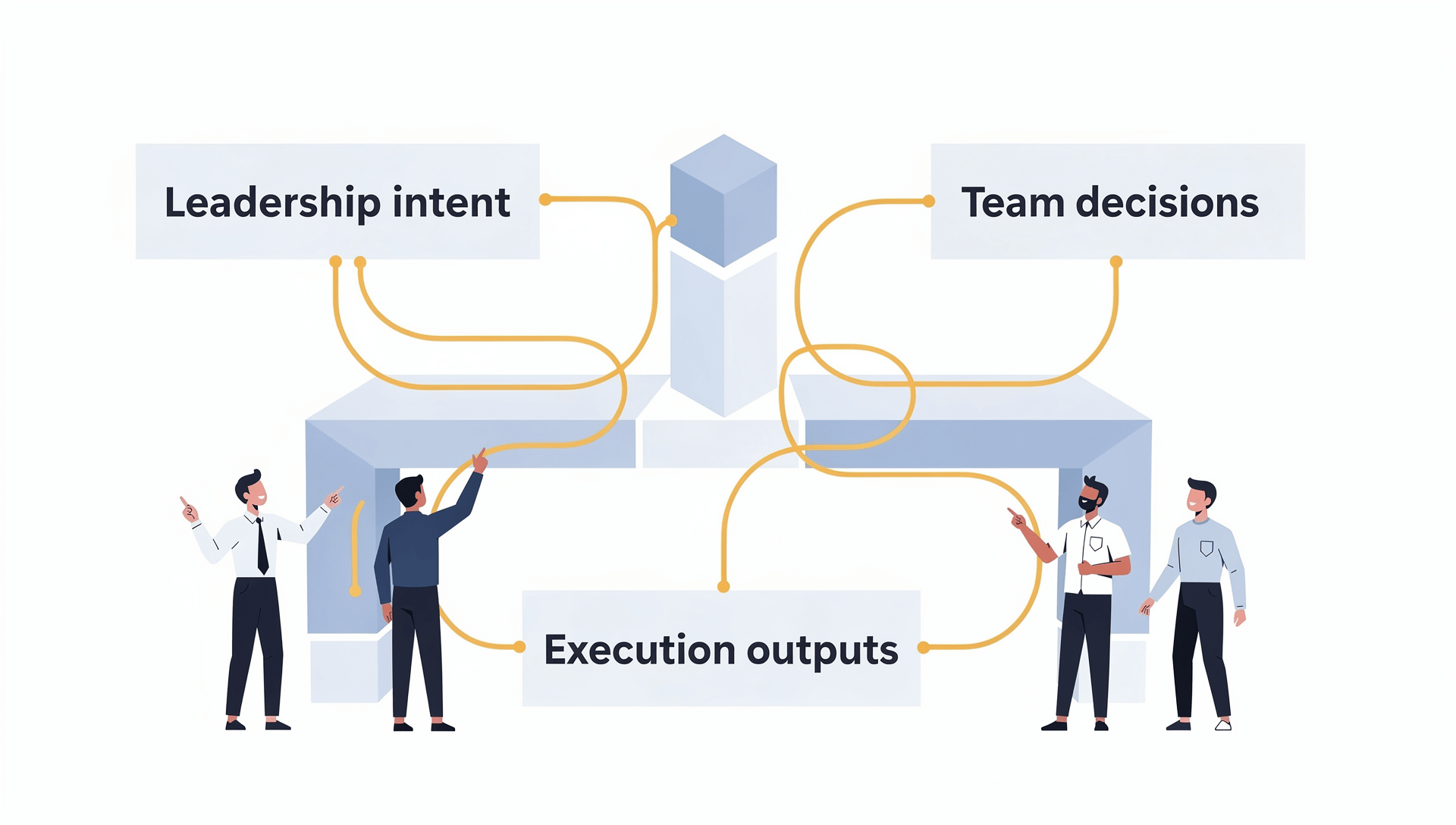

How LLMs Support Alignment (Without Owning Decisions)

LLMs are effective in strategy when they act as alignment infrastructure. They help by:

- Preserving shared context across conversations

- Making assumptions explicit instead of implicit

- Ensuring decisions reference the same strategic intent

- Reducing interpretation gaps between teams

- Keeping strategy coherent as inputs change

They don’t decide what to do. They help everyone understand why something is done, consistently.
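One simple way to picture "decisions reference the same strategic intent" is a shared preamble that is attached to every request sent to a model. The sketch below assumes nothing about any particular LLM provider and makes no model call; `build_context` and its parameters are hypothetical names used for illustration. It only assembles the text, and it asks for conflicts rather than a recommendation, in line with the point that the model does not own the decision.

```python
def build_context(intent, assumptions, decision):
    """Assemble one prompt preamble so every request carries the same intent."""
    lines = ["Strategic intent: " + intent, "Stated assumptions:"]
    lines += [f"- {a}" for a in assumptions]  # assumptions made explicit, one per line
    lines.append("Decision under review: " + decision)
    lines.append(
        "Task: list any conflict between the decision and the intent "
        "or assumptions. Do not recommend a decision."
    )
    return "\n".join(lines)


# Hypothetical example inputs
prompt = build_context(
    intent="Grow enterprise retention",
    assumptions=["Enterprise buyers require SSO", "Launch is planned for Q3"],
    decision="Postpone SSO to next year",
)
print(prompt)
```

Because the same intent and assumptions are passed in every time, two teams asking about two different decisions start from the same written context instead of from their own recollection of it.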

Why This Matters More Than Speed or Automation

Most AI narratives focus on:

- Faster outputs

- Automated analysis

- Decision shortcuts

But in strategy, speed without alignment creates:

- Conflicting execution

- Fragile decisions

- Costly rework

LLMs add value by slowing down misalignment, not by speeding up decisions. That’s a more durable advantage.

How Priowise Uses LLMs to Align, Not Decide

At Priowise, LLMs are used to:

- Anchor decisions to strategic objectives

- Maintain shared context across stakeholders

- Surface inconsistencies before they become problems

- Support human judgment with continuity, not substitution

The final decision always belongs to people. LLMs ensure those decisions stay aligned — even as reality changes.
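As a generic illustration of surfacing inconsistencies early (not a description of how Priowise is implemented), the sketch below groups assumptions recorded by different teams and reports the topics where the teams disagree. The function name `diverging_assumptions`, the record shape, and the sample data are all assumptions made for this example.

```python
from collections import defaultdict


def diverging_assumptions(records):
    """Group (team, topic, value) records and return topics with more than one value."""
    by_topic = defaultdict(dict)
    for team, topic, value in records:
        by_topic[topic][team] = value
    return {
        topic: teams
        for topic, teams in by_topic.items()
        if len(set(teams.values())) > 1  # teams hold different values for this topic
    }


# Hypothetical sample data
records = [
    ("sales", "target segment", "enterprise"),
    ("product", "target segment", "mid-market"),
    ("sales", "launch quarter", "Q3"),
    ("product", "launch quarter", "Q3"),
]

conflicts = diverging_assumptions(records)
print(conflicts)
```

Here only "target segment" is reported, because both teams agree on the launch quarter. Resolving the disagreement remains a conversation between people.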

Strategy Doesn’t Need Less Thinking — It Needs Less Friction

The promise of LLMs is not intelligence replacement. It’s friction reduction:

- Between intent and execution

- Between leadership and teams

- Between past decisions and present reality

When alignment holds, strategy compounds. When it doesn’t, even brilliant thinking collapses.

LLMs Are Alignment Tools, Not Decision Engines

Used correctly, LLMs don’t threaten strategic thinking. They protect it. They make sure that:

- Strategy survives scale

- Intent survives delegation

- Decisions survive time

That’s not a replacement. That’s alignment.

Mini FAQ — LLMs and Strategic Alignment

Do LLMs make strategic decisions in Priowise?

No. Priowise uses LLMs to preserve context and alignment, not to replace human judgment.

How do LLMs improve alignment specifically?

They ensure decisions, objectives, and assumptions remain connected and consistently interpreted across teams and time.

Isn’t alignment a leadership responsibility?

Yes. LLMs don’t replace leadership — they provide the infrastructure that helps leadership scale alignment.

Can LLMs introduce bias into strategy?

They can, as any model can, and the risk grows when model output is treated as strategic direction. In Priowise, LLMs reflect existing inputs rather than generating independent strategic direction.

Why is alignment more important than speed?

Because misaligned speed creates rework, conflict, and execution failure. Alignment ensures progress compounds.